Lab 02-2: Sarsa

Background: From MC Control to Temporal Difference

In MC Control, we learned Q-values by running complete episodes and computing returns. The update waited until the episode ended:

\[Q(s,a) \leftarrow Q(s,a) + \frac{1}{N(s,a)}(G_t - Q(s,a))\]where \(G_t = r_t + \gamma r_{t+1} + \gamma^2 r_{t+2} + \cdots\) is the full discounted return.

Temporal Difference (TD) methods improve on this by updating Q at every step using a bootstrapped estimate — we don’t need to wait for the episode to end. The 1-step Sarsa update replaces the full return \(G_t\) with a one-step estimate:

\[Q(s,a) \leftarrow Q(s,a) + \alpha \left[ r + \gamma Q(s', a') - Q(s,a) \right]\]The name SARSA comes from the five quantities used in each update: \((S, A, R, S', A')\).

| MC Control | 1-Step Sarsa | |

|---|---|---|

| Update target | Full return \(G_t\) (sum of all future rewards) | One-step estimate \(r + \gamma Q(s', a')\) |

| When to update | After the episode ends | After every step |

| Bias vs. variance | Unbiased but high variance | Lower variance but biased (bootstrapping) |

Starter Code

Use the MC Control demo as your starting point. You will adapt it to implement 1-step Sarsa.

Part 1: 1-Step Sarsa (40 pts)

Adapt the MC Control code to implement 1-step Sarsa. Here are the key changes you need to make:

- The

updatemethod should take a single transition(obs, act, reward, next_obs, next_act)instead of a full episode trajectory. Apply the TD update: \(Q(s,a) \leftarrow Q(s,a) + \alpha [r + \gamma Q(s', a') - Q(s,a)]\). - The training loop should call

policy.update(...)at every step inside the episode, not after it ends. Since TD methods bootstrap, they don’t need to wait for the episode to finish. - Learning rate replaces the incremental mean. Use a fixed \(\alpha = 0.01\) instead of \(1/N(s,a)\).

Here is the full training loop structure you should use:

reward_list = []

n_episodes = 20000

for i in tqdm(range(n_episodes)):

obs, _ = env.reset()

while True:

act = policy(obs)

next_obs, reward, done, truncated, _ = env.step(act)

next_act = policy(next_obs)

policy.update(obs, act, reward, next_obs, next_act)

if done or truncated:

break

obs = next_obs

policy.eps = max(0.01, policy.eps - 1.0/n_episodes)

mean_reward = np.mean([sum(run_episode(env, policy, False)[2])

for _ in range(2)])

reward_list.append(mean_reward)

policy.eps = 0.0

scores = [sum(run_episode(env, policy, False)[2]) for _ in range(100)]

print("Final score: {:.2f}".format(np.mean(scores)))

import pandas as pd

df = pd.DataFrame({'reward': reward_list})

df.to_csv("./data_lab2/SARSA-1.csv", index=False, header=True)

Note: Set

eps = 0when testing/evaluating and restore it for training.

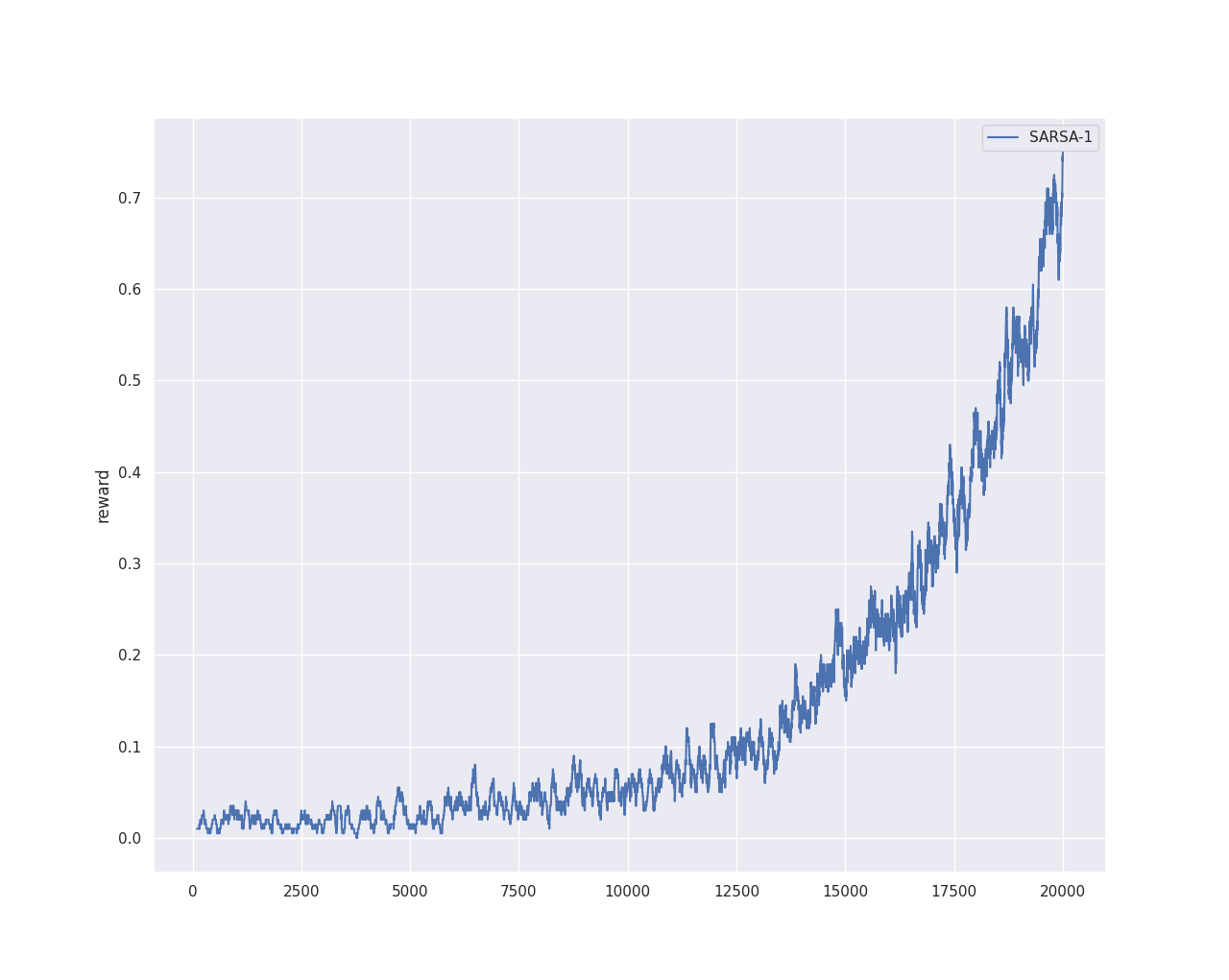

To plot the learning curve, use the plot.py utility (also available on the Lab Submission Guideline page):

python plot.py ./data_lab2 reward -s 500

The -s 500 applies a 500-point moving average for smoothing. Feel free to find a good smoothing parameter for clear visualization. A sample output:

Part 2: n-Step Sarsa (20 pts)

Concept

1-step Sarsa bootstraps after a single step. n-step Sarsa waits \(n\) steps before bootstrapping, using a longer return estimate:

\[G_t^{(n)} = r_t + \gamma r_{t+1} + \cdots + \gamma^{n-1} r_{t+n-1} + \gamma^n Q(s_{t+n}, a_{t+n})\] \[Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ G_t^{(n)} - Q(s_t, a_t) \right]\]This sits between 1-step Sarsa (\(n=1\)) and MC (\(n=\infty\)): larger \(n\) means less bias but more variance.

What to Implement

Implement 2-step and 5-step Sarsa. Save each to a separate CSV (SARSA-2.csv, SARSA-5.csv) and plot all learning curves together for comparison.

Code Skeleton

Here is the class structure to follow. You need to fill in the implementation for each method.

from collections import deque

class NStepSarsaPolicy:

def __init__(self, Q, eps, n_step, gamma=0.98, lr=0.01):

self.Q = Q

self.eps = eps

self.n_step = n_step

self.gamma = gamma

self.lr = lr

# Fixed-size buffer: automatically discards oldest when full

self.buffer = deque(maxlen=n_step)

def reset_buffer(self):

"""Call at the start of each episode."""

self.buffer.clear()

def update(self, obs, act, reward, next_obs, next_act, done):

# Push new transition; if buffer is already full, the oldest

# is automatically pushed out (deque with maxlen)

self.buffer.append((obs, act, reward))

# Normal case: buffer is full, not end of episode

if not done and len(self.buffer) == self.n_step:

# TODO: compute n-step return G from all n rewards in buffer

# G = r_0 + γr_1 + ... + γ^(n-1)r_{n-1} + γ^n * Q(next_obs, next_act)

# Update Q for the oldest (s, a) in buffer: buffer[0]

pass

# End of episode: process ALL remaining entries in the buffer

elif done:

# TODO: loop through every entry in the buffer (index 0, 1, ...).

# For each entry at index idx, compute the return from the

# rewards at buffer[idx], buffer[idx+1], ..., buffer[-1].

# No bootstrap (terminal state). Update Q for buffer[idx].

pass

def __call__(self, state):

if np.random.rand() < self.eps:

return np.random.choice(n_action)

return np.argmax(self.Q[state, :])

Key points to get right:

- Fixed-size buffer:

deque(maxlen=n)automatically discards the oldest entry when youappendpast capacity. This keeps the buffer always at size \(n\) during the episode — no manual popping needed. - Update every step: When the buffer is full and

done=False, compute the n-step return from the \(n\) rewards in the buffer, add the bootstrap term \(\gamma^n Q(s', a')\), and update Q for the oldest entrybuffer[0]. - End of episode — process ALL remaining entries: When

done=True, the buffer may have up to \(n\) unprocessed entries. You must loop through every entry in the buffer and update each one with a return computed from however many future rewards remain after it. No bootstrap (terminal state). - Why this matters for FrozenLake: The only non-zero reward (

reward=1) occurs at the very last step. That reward sits in the last buffer entry. If you only updatebuffer[0]when done, the states closer to the goal (atbuffer[1],buffer[2], …) never get credit for being near the goal — and learning degrades severely, especially for large \(n\).

Part 3: Sarsa(\(\lambda\)) (10 pts)

Read this tutorial first Sarsa(λ) Explanation. The material covers many advanced topics, but you only need to focus on the understanding and implementation of Sarsa(λ).

Concept

n-step Sarsa fixes a single lookahead depth \(n\), but which \(n\) is best? Sarsa(\(\lambda\)) avoids this choice by blending ALL n-step returns using an exponentially decaying weight. Instead of implementing multiple lookaheads, it uses eligibility traces — a simple bookkeeping trick that achieves the same effect.

The idea: maintain a trace \(E(s, a)\) for every state-action pair. The trace records how “recently and frequently” each \((s, a)\) was visited. When a TD error is available to update, ALL state-action pairs get updated in proportion to their trace.

The Algorithm

At each step, after observing \((s, a, r, s', a')\):

1. Compute the TD error (same as 1-step Sarsa):

\[\delta = r + \gamma Q(s', a') - Q(s, a)\]2. Update the eligibility trace for the current \((s, a)\):

\[E(s, a) \leftarrow E(s, a) + 1\](This is the “accumulating trace” variant — increment the trace for the visited state-action pair.)

3. Update ALL Q-values using the trace:

\[Q(s, a) \leftarrow Q(s, a) + \alpha \cdot \delta \cdot E(s, a) \quad \text{for all } (s, a)\]4. Decay ALL traces:

\[E(s, a) \leftarrow \gamma \lambda \cdot E(s, a) \quad \text{for all } (s, a)\]5. Reset traces at the start of each episode:

\[E(s, a) = 0 \quad \text{for all } (s, a)\]How \(\lambda\) Controls the Blend

- \(\lambda = 0\): Traces decay instantly. Only the current \((s, a)\) gets updated → equivalent to 1-step Sarsa.

- \(\lambda = 1\): Traces persist for the entire episode → approaches MC (every-visit).

- \(0 < \lambda < 1\): A smooth blend. Recent state-action pairs get larger updates, older ones get exponentially smaller updates.

Code Skeleton

class SarsaLambdaPolicy:

def __init__(self, Q, eps, lam=0.8, gamma=0.98, lr=0.01):

self.Q = Q

self.eps = eps

self.lam = lam # λ: trace decay parameter

self.gamma = gamma

self.lr = lr

# Eligibility traces: same shape as Q

self.E = np.zeros_like(Q)

def reset_traces(self):

"""Call at the start of each episode."""

# TODO: reset all traces to 0

def update(self, obs, act, reward, next_obs, next_act):

"""Apply the 4-step update described above:

1. Compute TD error δ

2. Increment trace for visited (s, a)

3. Update ALL Q-values proportional to their trace

4. Decay ALL traces by γλ

"""

# TODO: implement

def __call__(self, state):

if np.random.rand() < self.eps:

return np.random.choice(n_action)

return np.argmax(self.Q[state, :])

Hint: The update method is very similar to 1-step Sarsa — you just add two extra lines for the trace (increment and decay). The key insight is that the Q update applies to every state-action pair, but pairs with near-zero traces get negligible updates.

Training loop: The same as 1-step Sarsa, with one addition — call reset_traces() at the start of each episode.

Try \(\lambda = 0.8\) as a starting point. Save results to SARSA-lambda.csv and plot alongside your other curves.

Part 4: Questions

Answer the following in your report:

- (15 pts) Show the learning curves for 1-step, 2-step, 5-step Sarsa on one plot. Extra points for Sarsa(\(\lambda\)) on the plot.

- (10 pts) Compare Sarsa with different step sizes. What are the pros and cons of n-step Sarsa with a large \(n\)? What happens as \(n \to \infty\)?

- (5 pts) Compare Sarsa(\(\lambda\)) with n-step Sarsa. What advantage does the eligibility trace approach have over fixing a single \(n\)?

Deliverables and Rubrics

Submit a PDF exported from your Jupyter notebook that includes your code and answers.

- (70 pts) Working implementations:

- 1-step Sarsa: 35 pts

- n-step Sarsa (2-step and 5-step): 20 pts

- Sarsa(\(\lambda\)): 15 pts

- (30 pts) Answers to all questions in Part 4.